Speedata’s APU to Be Deployed in Nebul’s European Sovereign Cloud

22 February, 2026

A strategic partnership will enable organizations across the European Union to run Apache Spark, big data, and AI workloads on dedicated hardware — while maintaining full data sovereignty.

Israeli chip startup Speedata has announced a strategic collaboration with European cloud provider Nebul, under which Speedata’s Analytics Processing Unit (APU) will be deployed for the first time within a sovereign cloud infrastructure in the European Union.

The move will allow European organizations to run large-scale big data and artificial intelligence workloads in the cloud while keeping their data within EU borders and under their full control.

Under the agreement, Nebul will become the first cloud provider to offer Speedata’s APU as part of its private NeoCloud platform. The infrastructure is designed for organizations with strict regulatory requirements, emphasizing not only compliance with GDPR and the EU AI Act, but also European ownership structures, operational control, and restricted external access to data.

At the core of the partnership is the integration of Speedata’s accelerator card into Nebul’s data centers across Europe. In practical terms, cloud customers will be able to run heavy data-processing workloads, most notably Apache Spark jobs, on specialized hardware built specifically for analytics.

Spark has become a de facto standard engine in enterprise big data environments. It powers massive SQL queries, joins across billions of records, aggregations, and distributed processing at scale. It sits behind many data warehouses, analytics platforms, and AI pipelines.

Beyond Spark, the platform will support ETL (Extract, Transform, Load) processes — pulling data from multiple sources, cleaning and structuring it, and loading it into centralized repositories. This stage is critical before any advanced analytics or model training can begin.
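For readers less familiar with these workload types, the following is a minimal sketch of an extract-transform-load step followed by a join and an aggregation. It uses only Python's standard library with an in-memory SQLite database, not Spark or any Speedata or Nebul software. The sample records, table names, and function names are all hypothetical and chosen purely for illustration.

```python
import sqlite3

# Hypothetical raw records from two separate sources (illustrative data only).
RAW_ORDERS = [
    {"order_id": "1", "customer_id": "c1", "amount": " 20.50 "},
    {"order_id": "2", "customer_id": "c2", "amount": "5.00"},
    {"order_id": "3", "customer_id": "c1", "amount": "not-a-number"},  # dirty row
    {"order_id": "4", "customer_id": "c1", "amount": "9.50"},
]
RAW_CUSTOMERS = [
    {"customer_id": "c1", "country": "nl"},
    {"customer_id": "c2", "country": "DE "},
]


def transform_orders(rows):
    """Transform: parse amounts and drop rows whose amount cannot be parsed."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"].strip())
        except ValueError:
            continue  # skip malformed records
        cleaned.append((int(row["order_id"]), row["customer_id"], amount))
    return cleaned


def transform_customers(rows):
    """Transform: normalize country codes to trimmed upper case."""
    return [(r["customer_id"], r["country"].strip().upper()) for r in rows]


def run_etl():
    """Load: write the cleaned records into a central in-memory repository."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (order_id INTEGER, customer_id TEXT, amount REAL)"
    )
    conn.execute("CREATE TABLE customers (customer_id TEXT, country TEXT)")
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)", transform_orders(RAW_ORDERS)
    )
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)", transform_customers(RAW_CUSTOMERS)
    )
    return conn


def revenue_by_country(conn):
    """Analytics: join orders to customers, then aggregate per country."""
    query = """
        SELECT c.country, COUNT(*) AS n_orders, SUM(o.amount) AS revenue
        FROM orders AS o
        JOIN customers AS c ON o.customer_id = c.customer_id
        GROUP BY c.country
        ORDER BY c.country
    """
    return conn.execute(query).fetchall()


if __name__ == "__main__":
    for country, n_orders, revenue in revenue_by_country(run_etl()):
        print(country, n_orders, revenue)
```

In production the same pattern runs over billions of rows spread across many machines, which is why the join and aggregation stages tend to dominate processing time and cost.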

The system is also designed to accelerate data preparation for AI training, as well as real-time RAG (Retrieval-Augmented Generation) queries, where large language models access structured enterprise data to generate context-aware responses.

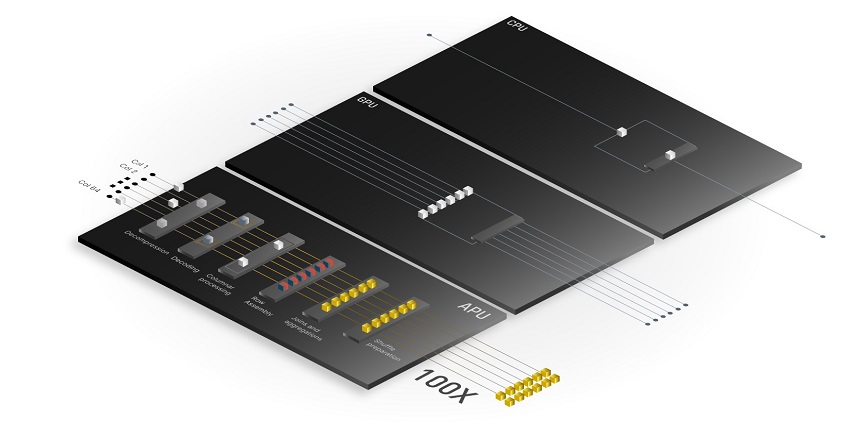

In many such scenarios, the bottleneck is not GPU compute power but the data-processing stage itself. That is where Speedata’s APU comes in. The chip is designed to execute complex analytical operations — such as joins and aggregations — directly in hardware, reducing latency and significantly lowering the number of servers required.

The AI Data Bottleneck

Founded in 2019, Speedata develops a purpose-built chip known as the Analytics Processing Unit. Unlike general-purpose CPUs or GPUs, the APU was architected from the ground up for analytics and big data workloads, with a particular focus on accelerating Apache Spark SQL.

Rather than routing query execution through multiple layers of software, memory transfers, and inter-server communication, the APU performs much of the computation directly in silicon. According to the company, this architecture enables significant performance gains while dramatically reducing server count and energy consumption. In one reported deployment, an APU-based system replaced dozens of servers with just a handful, sharply cutting operating costs.

Speedata positions itself as addressing a critical challenge in modern AI infrastructure: the data layer that feeds model training and inference — a layer that often determines overall cost and time-to-value.

Nebul is a European sovereign cloud provider focused on private cloud environments for AI and high-performance computing workloads. The company primarily serves large enterprises and public-sector organizations seeking to avoid reliance on U.S. hyperscalers while maintaining full legal and operational control over infrastructure and data.

Nebul’s cloud infrastructure is built on European data centers, with an emphasis on regulatory compliance, cybersecurity, and strict adherence to EU standards. It already offers advanced GPU infrastructure for AI training and inference, and now adds a dedicated analytics acceleration layer to its stack — expanding its capabilities from compute to data processing.

The European Context: Sovereignty as Strategy

The partnership comes amid a sharp rise in AI computing demand across Europe over the past year. Organizations are scaling infrastructure to process growing volumes of data, while simultaneously navigating complex jurisdictional questions around data ownership, operational control, and exposure to non-EU legislation.

In Europe, data sovereignty extends beyond the physical location of servers. It encompasses ownership structures, operational governance, and who ultimately has legal access to information. Against this backdrop, combining a purpose-built analytics chip with a European sovereign cloud infrastructure offers a dual value proposition: higher performance for big data and AI workloads, alongside regulatory clarity and control.

On its face, this appears to be one of the first public deployments of Speedata’s technology within a commercial European sovereign cloud. If expanded, it could mark a transition for the company from technology validation toward deeper market penetration through strategic partnerships.

For Nebul, integrating a dedicated analytics accelerator may serve as a differentiator in a competitive European cloud market where performance increasingly matters as much as sovereignty.