At GTC yesterday, NVIDIA unveiled for the first time the full architecture of its Vera Rubin platform, the company’s next-generation AI infrastructure designed for the era of agentic AI. Unlike previous generations built around discrete servers, Rubin is presented as a complete rack-scale system in which multiple specialized racks operate together as what NVIDIA describes as an “AI factory.”

The architecture consists of several types of racks, each responsible for a different layer of the system. GPU racks, powered by Rubin processors, handle the most compute-intensive workloads — training large models and running real-time inference. CPU racks manage environments, agents and orchestration logic. Storage racks handle memory and large-scale context management, while networking racks connect the system through high-speed interconnects. In addition, dedicated inference accelerators are integrated to optimize response generation.

The CPU Returns to Center Stage

One of the most notable innovations in the architecture is the Vera CPU rack — a dedicated system designed to address the emerging workloads of autonomous AI agents.

In traditional AI systems, GPUs handled most of the heavy lifting, while CPUs played a supporting role. In the agentic era, however, a growing share of the workload shifts to the CPU: running code, invoking tools, orchestrating workflows, validating outputs and managing simulations.

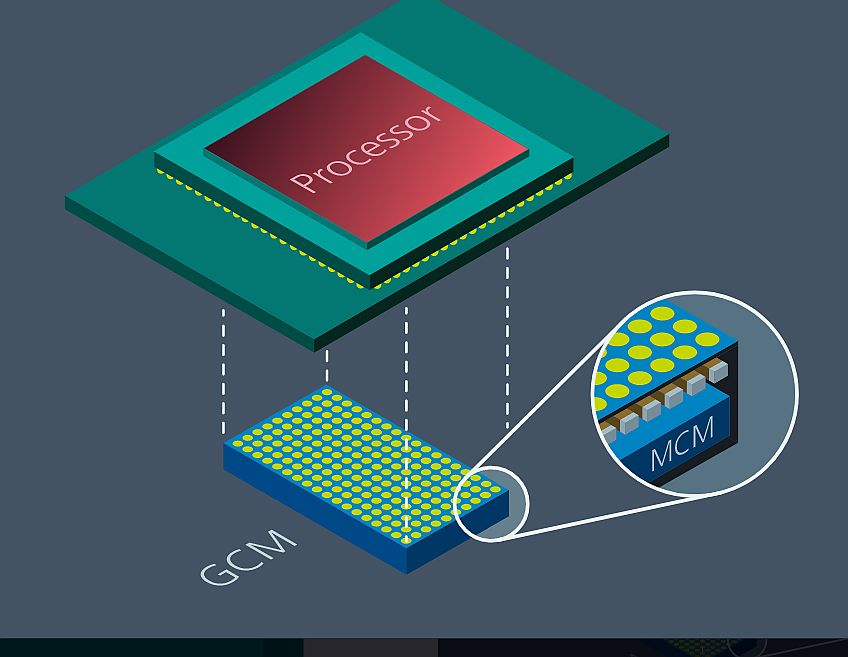

According to NVIDIA, each CPU rack can include up to 256 Vera processors and can run tens of thousands of independent CPU environments in parallel. The processor itself features 88 custom-designed cores, with a strong emphasis on single-thread performance and high memory bandwidth of up to 1.2 TB/s, alongside significant gains in energy efficiency.
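
For a rough sense of scale, the quoted figures can be multiplied out. The short Python sketch below is a back-of-the-envelope calculation, not an NVIDIA specification: it assumes a fully populated rack, treats the 1.2 TB/s figure as a per-processor peak that simply adds up across processors, and uses a hypothetical count of 20,000 environments to stand in for "tens of thousands."

```python
# Figures quoted in the article (stated maximums per NVIDIA).
PROCESSORS_PER_RACK = 256
CORES_PER_PROCESSOR = 88
BANDWIDTH_TBPS_PER_PROCESSOR = 1.2  # assumed to be a per-processor peak

# Hypothetical value chosen only for illustration; the article says
# "tens of thousands" without giving an exact number.
ASSUMED_ENVIRONMENTS = 20_000


def rack_totals(processors: int, cores: int, bandwidth_tbps: float) -> dict:
    """Return naive per-rack totals, assuming linear scaling across processors."""
    return {
        "cores": processors * cores,
        "bandwidth_tbps": processors * bandwidth_tbps,
    }


def cores_per_environment(total_cores: int, environments: int) -> float:
    """Average number of cores available to each environment."""
    return total_cores / environments


totals = rack_totals(
    PROCESSORS_PER_RACK, CORES_PER_PROCESSOR, BANDWIDTH_TBPS_PER_PROCESSOR
)
share = cores_per_environment(totals["cores"], ASSUMED_ENVIRONMENTS)

print(f"Cores per rack: {totals['cores']}")  # 256 * 88 = 22528
print(f"Naive aggregate memory bandwidth: {totals['bandwidth_tbps']:.1f} TB/s")
print(f"Cores per environment at {ASSUMED_ENVIRONMENTS} environments: {share:.2f}")
```

Under these assumptions, a full rack holds roughly 22,500 cores, which works out to about one core per environment at the assumed count. That is consistent with the article's emphasis on single-thread performance, though the real ratio depends on how environments are actually scheduled.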

A key architectural element is the direct connection between CPU and GPU via NVLink, enabling far faster data exchange than traditional server interconnects such as PCIe. As a result, the CPU no longer just coordinates GPU workloads; it becomes an integral part of the computation pipeline itself.

Meanwhile, Intel Remains — But in a Reduced Role

At the same time, Intel announced that its Xeon 6 processors have once again been selected as the host CPU for NVIDIA’s DGX Rubin NVL8 systems — servers equipped with eight GPUs that serve as a fundamental building block of the platform.

In these systems, Xeon continues to perform its traditional role: managing GPUs, scheduling workloads and handling data movement. Its strengths remain in large memory capacity, high I/O bandwidth and broad compatibility with existing infrastructure.

However, within the broader Rubin architecture, this role is becoming increasingly limited.

From Blackwell to Rubin: A Shift in Power

The transition from Blackwell to Rubin clearly illustrates the shift. In previous generations, AI infrastructure was largely built around DGX or HGX servers, each combining NVIDIA GPUs with CPUs — typically from Intel. In practice, Xeon processors were present in nearly every system.

With Rubin, the architecture evolves. Instead of uniform servers, the system becomes a heterogeneous, rack-scale infrastructure, where each component is purpose-built. The CPU takes on a more central role — but not necessarily through Intel.

The Vera CPU rack now serves as the layer responsible for running agents and orchestrating the system, while Xeon processors are largely confined to host roles within NVL8 systems. In other words, Intel remains inside the system — but no longer defines its foundation.

The Big Picture: Vertical Integration of AI Infrastructure

The broader picture is clear: NVIDIA is steadily moving toward full-stack control of AI infrastructure — from GPUs to CPUs, networking and storage.

While its partnership with Intel continues, NVIDIA is simultaneously building an internal alternative that strengthens its long-term position. Where the CPU once represented a critical external dependency, it is now becoming another layer under NVIDIA’s own control.

For the data center market, this marks a fundamental shift: from general-purpose servers to AI-native infrastructure — where even the CPU is purpose-built for the agentic era. The result could redefine the balance of power among chipmakers in the years ahead.