By: Rita Horner, Program Manager at the Solutions Group, Synopsys

Fast data transfer and efficient data processing form the backbone of a wide array of modern applications that run in hyperscale data centers. Demand for bandwidth continues unabated, and Ethernet speeds have reached 400 and 800 gigabits per second (400G/800G), driven in large part by hyperscalers. These high data rates take a toll on server application processors. This is where network interface cards (NICs) come in. NICs support communication between computers in a system by converting data packets into signals that are transmitted across a network.

Thanks to their programmability and hardware acceleration capabilities, SmartNICs bring flexibility and efficiency to data center networking, storage, and security. By offloading various routine tasks, SmartNICs free host server CPUs to focus on core application processing functions. This article, originally published in the “From Silicon to Software” blog, examines how SmartNICs are supporting our increasingly digital world, emerging data center infrastructure trends, and electronic design automation (EDA) and IP technologies that can help you keep up with the evolution.

Understanding the Intelligence of SmartNICs

As SerDes lane rates increased from 1G to 10G to 112G, and Ethernet speeds from 25G to 100G to 200G/400G and, now, 800G, the thinking about hardware architecture has shifted. A peek inside a traditional data center architecture would reveal CPUs, memory, storage, and network components. In recent years, a consensus has formed that general-purpose CPUs are no longer the best place to run infrastructure functions. A lot of overhead is required to support functions like hypervisors, routing, and load balancing, as well as IO-intensive security functions like deep packet inspection and data storage encryption/decryption.

Some hyperscalers have estimated that around half of CPU cycles are consumed by non-revenue-generating infrastructure tasks. SmartNICs can take on much of the heavy lifting, freeing CPUs to focus on revenue-generating application processing. The intelligence of SmartNICs comes from their programmability, along with their hardware acceleration capabilities. Bringing together wired networking and computational resources on a single card, SmartNICs feature their own on-board processor, accelerators implemented as a custom ASIC or an FPGA, and high-speed memory and IOs.
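To put the "around half of CPU cycles" estimate in perspective, here is a back-of-the-envelope sketch in Python. The function name and its parameters are hypothetical, and the model assumes that offloaded work costs the host CPU nothing, which real deployments are unlikely to fully achieve.

```python
def application_capacity_gain(infra_fraction: float, offload_fraction: float) -> float:
    """Estimate how much more host CPU time applications get after offload.

    infra_fraction:   share of host CPU cycles spent on infrastructure tasks
                      (the article cites estimates of around 0.5).
    offload_fraction: share of that infrastructure work moved to the SmartNIC
                      (hypothetical; depends on the workload and the card).

    Assumption: offloaded work consumes no host cycles at all.
    """
    if not 0.0 <= infra_fraction < 1.0:
        raise ValueError("infra_fraction must be in [0, 1)")
    if not 0.0 <= offload_fraction <= 1.0:
        raise ValueError("offload_fraction must be in [0, 1]")

    cycles_for_apps_before = 1.0 - infra_fraction
    infra_left_on_host = infra_fraction * (1.0 - offload_fraction)
    cycles_for_apps_after = 1.0 - infra_left_on_host
    return cycles_for_apps_after / cycles_for_apps_before


if __name__ == "__main__":
    for offload in (0.0, 0.5, 1.0):
        gain = application_capacity_gain(0.5, offload)
        print(f"offload {offload:.0%} of infrastructure work -> {gain:.2f}x application cycles")
```

Under these assumptions, a host that spends half its cycles on infrastructure would see the cycles available to applications double if all of that work moved to the SmartNIC.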

The intelligence integrated into these networking components has evolved to keep pace with changes in data center bandwidth requirements. Initially, the focus was on offloading infrastructure functions from the host server CPU. Then, platform functions such as cloud technologies were offloaded, from hypervisors to virtual machines, containers, and then microservices. Now, we’re seeing demand for application acceleration: for example, speeding up tasks such as video transcoding, encryption/decryption, and packet processing. CPUs can’t support the packet processing demands at today’s highest line rates, so offloading to programmable hardware, in the form of SmartNICs, makes sense.
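A rough calculation illustrates why per-packet processing on a CPU becomes impractical at these line rates. The sketch below estimates the packet rate on a fully loaded Ethernet link and the number of clock cycles a single core would have per packet. The 20 bytes of per-frame overhead (preamble, start-of-frame delimiter, and interframe gap) are standard Ethernet framing; the 3 GHz core clock and the function names are assumptions chosen for illustration, not figures from this article.

```python
# Per-frame overhead on the wire, in addition to the frame itself.
PREAMBLE_AND_SFD_BYTES = 8
INTERFRAME_GAP_BYTES = 12


def packets_per_second(line_rate_bps: float, frame_bytes: int) -> float:
    """Maximum packet rate on a link saturated with frames of one size."""
    wire_bytes = frame_bytes + PREAMBLE_AND_SFD_BYTES + INTERFRAME_GAP_BYTES
    return line_rate_bps / (wire_bytes * 8)


def cycles_per_packet(line_rate_bps: float, frame_bytes: int, core_hz: float) -> float:
    """Clock cycles one core has per packet if it must keep up with line rate."""
    return core_hz / packets_per_second(line_rate_bps, frame_bytes)


if __name__ == "__main__":
    CORE_HZ = 3e9  # assumed 3 GHz core, for illustration only
    for rate_bps in (100e9, 400e9, 800e9):
        for frame_bytes in (64, 1518):  # minimum and maximum standard frame sizes
            pps = packets_per_second(rate_bps, frame_bytes)
            budget = cycles_per_packet(rate_bps, frame_bytes, CORE_HZ)
            print(
                f"{rate_bps / 1e9:.0f}G, {frame_bytes}-byte frames: "
                f"{pps / 1e6:.1f} Mpps, {budget:.1f} cycles per packet per core"
            )
```

With minimum-size 64-byte frames, a 400G link can carry roughly 595 million packets per second, which leaves an assumed 3 GHz core about five cycles per packet. That is far too few for parsing, lookup, and forwarding in software, even before any application work is done.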

Data Center Infrastructure Trends

With many potential use cases, what’s the right mix of components for a SmartNIC? Well, there really isn’t a one-size-fits-all approach. Data processing units are at the heart of SmartNICs, and can contain components for programmable compute, network protocol management, security, and storage. For some data centers, a few processor cores are perfect because they’re mainly used for virtual machine management. For others, more than a dozen processor cores are needed to run, say, an entire Linux operating system instance. Looking ahead, here are a few data center infrastructure trends to consider to ensure that your SmartNICs will serve you well into the future as networking traffic continues to grow:

- The infrastructure of the future for SmartNICs is a disaggregated one, based on four types of die, or chiplets: a CPU subsystem, an IO subsystem, an accelerator ASIC or FPGA, and, optionally, integrated memory such as high-bandwidth memory (HBM). Disaggregated dies, or chiplets, support power and area goals while providing the flexibility and product modularity to address different needs in a single package. By comparison, a monolithic approach results in a large, complex chip that comes with yield and time-to-market risks as well as high costs.

- In a disaggregated die approach, high-speed connectivity between the components is essential to ensure smooth and fast data transfer. High bandwidth, power efficiency, and low latency are key criteria to meet. Universal Chiplet Interconnect Express (UCIe) is emerging as an answer.

- For mainstream deployment of SmartNICs in every server, the hardware needs to be integrated seamlessly into an open-standard software stack and should be able to run an open network operating system (NOS). Ideally, infrastructure functions are deployed as pre-built containers with APIs that plug into the rest of the software stack layers.

Data center architectures are continuing to evolve to meet ever-increasing bandwidth demands. As such, reliability, security, and interoperability of their IP blocks remain critical for SmartNICs, given their important role in the smooth flow of data traffic. This is where Synopsys can help, with our comprehensive portfolio of advanced IP at different process nodes along with our broad array of multi-die design and verification solutions.

On the IP side, we offer:

- Die-to-die interfaces including 112G XSR PHY and controllers

- ARC processors for networking applications

- Foundation IP including low-latency embedded memories with standard and ultra-low leakage logic libraries

- Memory interfaces including DDR and HBM PHYs and Controllers

- Standards-based security IP including hardware secure modules with root of trust, interface security modules, cryptography, and security protocol accelerators

- Accelerators including DSP

- Cache coherent expansion including CCIX/CXL controllers, inline AES cryptography, and PCI Express® PHY and Controller

- Network interfaces including Ethernet Controllers and PHYs for speeds up to 800G

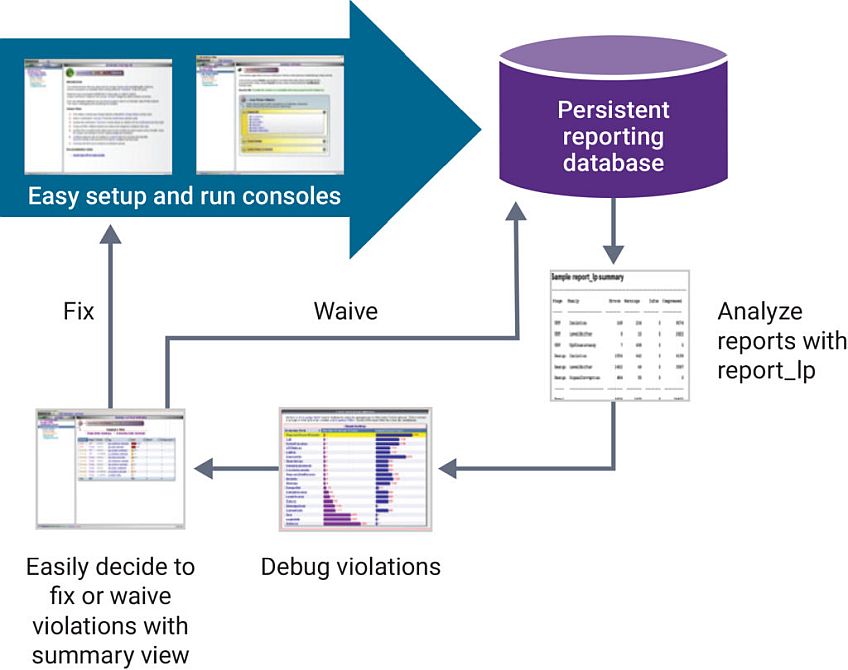

On the design and verification side, we offer technologies to accelerate the development of multi-die designs, such as our comprehensive and integrated 3DIC solution, which encompasses architectural planning, silicon engineering, 3D system design, verification, test, co-packaged optics, silicon lifecycle management, signoff/system analysis, and IP. In addition, our virtual prototyping tools can help you determine parameters such as the right mix of processor cores and the ideal accelerator for your design. We have design services support, too, to assist with SmartNIC design and/or IP integration and verification.

Summary

Our digital world revolves around high volumes of complex data. To ensure an array of swift and seamless transactions online and in the cloud, data center architectures are moving to a composable model, where homogeneous networking, storage, and compute resources are connected via pluggable optical modules (and co-packaged optics merging electronic and photonic components in the future). In this environment, SmartNICs take the load off of primary compute resources, allowing them to focus on core application processing. While NICs have been around since the mid-1980s, their increased intelligence has made them indispensable for today’s hyperscale data centers. Successful mainstream deployment depends on integration of multi-vendor hardware into industry-standard, open-source software stacks.